Developer AWS Accounts

Use your individual AWS account under the Sage consolidated bill for AWS experiments. The rule of thumb is that if you cannot shut off whatever you are running while you are on vacation, it belongs in the Production AWS Account.

Production AWS Account

Use your IAM Account for:

- S3

- EC2

- Elastic MapReduce (command line access only right now)

- Log in to the AWS console with your IAM login and password: https://325565585839.signin.aws.amazon.com/console/ec2

Use the platform@sagebase.org Account for:

- Elastic Beanstalk

- console usage of Elastic MapReduce

- Log into the AWS console with the platform@sagebase.org username and password (get it from Nicole): https://console.aws.amazon.com/

First time accessing the console

- Get your IAM credentials

- from Nicole

- or from the backup copy on fremont:/home/ndeflaux/PlatformIAMCreds (if you have the Sage root password)

- Then either

- Tell Nicole what password you want

- Or set your password yourself

- Install the IAM tools on your machine: http://docs.amazonwebservices.com/IAM/latest/GettingStartedGuide/index.html?GetTools.html

- Create a password for yourself (there is currently no way to change your password via the console UI)

iam-useraddloginprofile -u YOU -p aDecentPassword

How To

Figure out if AWS is broken

AWS occasionally has issues. To figure out whether the problem you are currently experiencing is their fault or not:

- Check the AWS status console to see if they are reporting any problems: http://status.aws.amazon.com/

- Check the most recent messages on the forums: https://forums.aws.amazon.com/index.jsp. Problems often get reported there first.

- If you still do not find evidence that the problem is AWS's fault, search the forums for your particular issue. It's likely that someone else has run into exactly the same problem in the past.

- Still no luck? Ask your coworkers and/or post a question to the forums.

Run a Report to Know Who has Accessed What When

Use Elastic MapReduce to run a script on all our logs in the bucket logs.sagebase.org. There are some scripts in bucket emr.sagebase.org/scripts that will do the trick. If you want to change what they do, feel free to make new scripts.

Here is what a configured job looks like:

And here is some sample output from the job. Note that:

- All Sage employees will have their sagebase.org username as their IAM username

- Platform users register with an email address and we will use that email address as their IAM username.

- User d9df08ac799f2859d42a588b415111314cf66d0ffd072195f33b921db966b440 is the platform@sagebase.org user (also known as Brian Holt :-). In general, you should only see activity from that user when we are using BucketExplorer to manage our files in S3.

arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:19:32:44 +0000] REST.GET.OBJECT human_liver_cohort/readme.txt
arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:19:32:56 +0000] REST.GET.OBJECT human_liver_cohort/readme.txt
arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:19:32:58 +0000] REST.GET.OBJECT human_liver_cohort/readme.txt
arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:17:47:45 +0000] REST.GET.LOCATION -
arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:23:42:17 +0000] REST.GET.LOGGING_STATUS -
arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:23:42:19 +0000] REST.HEAD.OBJECT human_liver_cohort.tar.gz
arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:19:32:40 +0000] REST.GET.BUCKET -
arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:17:47:46 +0000] REST.GET.BUCKET -
arn:aws:iam::325565585839:user/nicole.deflaux [17/Feb/2011:01:48:42 +0000] REST.GET.BUCKET -
arn:aws:iam::325565585839:user/nicole.deflaux [17/Feb/2011:01:48:42 +0000] REST.GET.LOCATION -
arn:aws:iam::325565585839:user/nicole.deflaux [17/Feb/2011:01:48:51 +0000] REST.HEAD.OBJECT mouse_model_of_sexually_dimorphic_atherosclerotic_traits.tar.gz
arn:aws:iam::325565585839:user/nicole.deflaux [17/Feb/2011:01:48:51 +0000] REST.GET.ACL mouse_model_of_sexually_dimorphic_atherosclerotic_traits.tar.gz
arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:23:42:17 +0000] REST.GET.ACL -
arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:23:42:19 +0000] REST.GET.ACL human_liver_cohort.tar.gz
arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:23:42:57 +0000] REST.HEAD.OBJECT mouse_model_of_sexually_dimorphic_atherosclerotic_traits.tar.gz
arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:23:42:17 +0000] REST.GET.NOTIFICATION -
arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:23:42:57 +0000] REST.GET.ACL mouse_model_of_sexually_dimorphic_atherosclerotic_traits.tar.gz
arn:aws:iam::325565585839:user/test [17/Feb/2011:01:55:44 +0000] REST.GET.OBJECT mouse_model_of_sexually_dimorphic_atherosclerotic_traits.tar.gz
arn:aws:iam::325565585839:user/test [16/Feb/2011:23:13:42 +0000] REST.GET.OBJECT human_liver_cohort/readme.txt
arn:aws:iam::325565585839:user/test [16/Feb/2011:23:22:02 +0000] REST.GET.OBJECT human_liver_cohort/expression/expression.txt
d9df08ac799f2859d42a588b415111314cf66d0ffd072195f33b921db966b440 [16/Feb/2011:23:06:17 +0000] REST.HEAD.OBJECT human_liver_cohort/readme.txt
d9df08ac799f2859d42a588b415111314cf66d0ffd072195f33b921db966b440 [16/Feb/2011:22:28:38 +0000] REST.GET.ACL -
d9df08ac799f2859d42a588b415111314cf66d0ffd072195f33b921db966b440 [16/Feb/2011:22:39:57 +0000] REST.GET.LOCATION -
d9df08ac799f2859d42a588b415111314cf66d0ffd072195f33b921db966b440 [16/Feb/2011:22:40:09 +0000] REST.COPY.OBJECT bxh_apoe/causality_result/causality_result_adipose_male.txt
d9df08ac799f2859d42a588b415111314cf66d0ffd072195f33b921db966b440 [16/Feb/2011:22:40:16 +0000] REST.HEAD.OBJECT bxh_apoe/causality_result/causality_result_adipose_male.txt
. . .
Downloads per file:
bxh_apoe/networks/BxH-ApoE_Brain_Male_batch_3_14_coexp-network.txt 2
bxh_apoe/networks/muscle_male-nodes.txt 2
bxh_apoe/networks/BxH-ApoE_Liver_Male_batch_3_4_coexp-log.txt 2
bxh_apoe/networks/SCN10_BxH-ApoE_Adipose_Male_batch_3_10_coexp.sif 2
bxh_apoe/networks/SCN19_muscle_female_bayesian.sif 2
human_liver_cohort/networks/deLiver_liver_all_adjusted_DE-Octave8_coexp-nodes.txt 2
bxh_apoe/networks/BxH-ApoE_Liver_Male_batch_3_4_coexp-annotation.txt 2
bxh_apoe/networks/BxH-ApoE_Brain_Male_batch_3_14_coexp-nodes.txt 2
bxh_apoe/networks/muscle_female-nodes.txt 2
human_liver_cohort/networks/QuickChip_female_bayesian-annotation.txt 2
. . .
bxh_apoe/networks/liver_female_coexp-annotation.txt 2
bxh_apoe/networks/SCN15_BxH-ApoE_Muscle_Female_batch_1_11_coexp.sif 2
bxh_apoe/networks/SCN7_brain_female_bayesian.sif 2
human_liver_cohort/sage_bionetworks_user_agreement.pdf 5
bxh_apoe/phenotype/ 1
bxh_apoe/networks/SCN14_BxH-ApoE_Liver_Male_batch_3_4_coexp.sif 2
Downloads per user:
arn:aws:iam::325565585839:user/nicole.deflaux 17
arn:aws:iam::325565585839:user/test 3
d9df08ac799f2859d42a588b415111314cf66d0ffd072195f33b921db966b440 931
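
If you want to prototype a new report locally before running it on Elastic MapReduce, the tallying step can be sketched in a few lines of Python. This is only an illustration and not one of the scripts in emr.sagebase.org/scripts: it assumes one entry per line in the form `requester [timestamp] operation key`, as in the sample output, and the function name and the `operation` parameter are made up for this example. What the real scripts count as a "download" is not shown on this page, so the filter is optional.

```python
import re
from collections import Counter

# Assumed entry layout, taken from the sample output:
#   requester [timestamp] operation key
ENTRY = re.compile(r"^(?P<user>\S+) \[(?P<when>[^\]]+)\] (?P<op>\S+) (?P<key>\S+)$")


def tally_requests(lines, operation="REST.GET.OBJECT"):
    """Count matching requests per key and per requester.

    Pass operation=None to count every logged request instead of
    only object downloads.
    """
    per_file, per_user = Counter(), Counter()
    for line in lines:
        match = ENTRY.match(line.strip())
        if match is None:
            continue  # skip blank lines, ". . ." markers, and headings
        if operation is not None and match.group("op") != operation:
            continue
        per_file[match.group("key")] += 1
        per_user[match.group("user")] += 1
    return per_file, per_user


sample = [
    "arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:19:32:44 +0000] REST.GET.OBJECT human_liver_cohort/readme.txt",
    "arn:aws:iam::325565585839:user/nicole.deflaux [18/Feb/2011:17:47:45 +0000] REST.GET.LOCATION -",
    "arn:aws:iam::325565585839:user/test [16/Feb/2011:23:13:42 +0000] REST.GET.OBJECT human_liver_cohort/readme.txt",
]
per_file, per_user = tally_requests(sample)
for key, count in per_file.items():
    print(key, count)
```

Calling `tally_requests(sample, operation=None)` counts all three entries, which is useful when you care about any access rather than downloads alone.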

Upload a dataset to S3

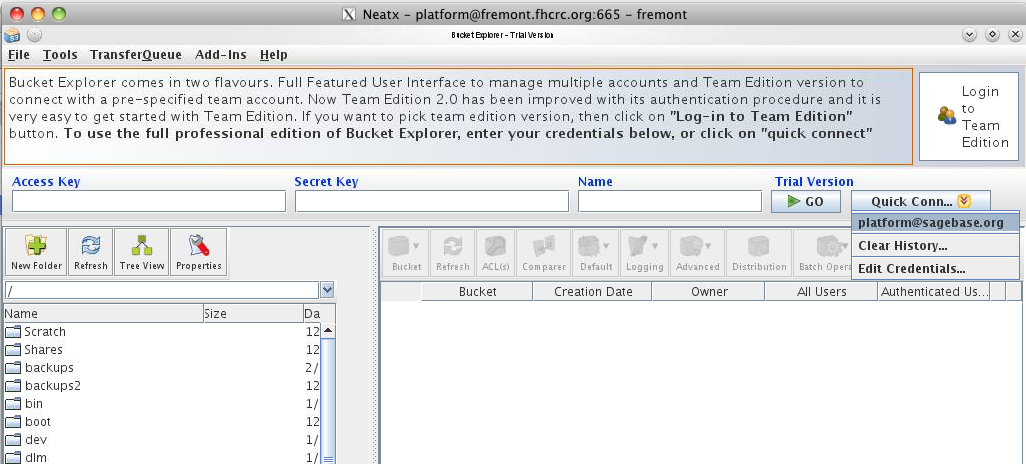

For the initial upload, we use a GUI tool called BucketExplorer (http://www.bucketexplorer.com/). Uploads are done from the internal host fremont.fhcrc.org using the local access account 'platform', which has the same password as the platform@sagebase.org account. The most efficient way to connect is to use an NX protocol client (http://www.nomachine.com/download.php) to get a virtual desktop as the user platform. Once connected, you can find the preconfigured BucketExplorer in the application menu in the lower left corner of the screen.

Mac OS X users: I installed "NX Client for Mac OSX" but it complained that bin/nxssh and bin/nxservice were missing. Those files were not installed under Applications but under /Users/deflaux/usr/NX/ instead.

The initial datasets are stored in /work/platform/. This entire collection is mirrored exactly and can be transferred by dragging and dropping it into the data01.sagebase.org S3 bucket. Do this as the user platform, because all files should be readable by that user for the transfer to work.

BucketExplorer is very efficient: it does hash comparisons and transfers only the files that have changed. You can also get a visual comparison of which files have changed using the 'Comparer' button. During the transfer, the program splits the work into 20 parallel streams to make efficient use of outgoing bandwidth to the cloud.
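
To make the idea of a hash comparison concrete, here is a minimal Python sketch of how a sync tool can decide which files need uploading. It is not BucketExplorer's actual implementation, which is not documented here. The function names and the `remote_hashes` mapping (object key to MD5 hex digest) are assumptions for illustration; for objects uploaded in a single part, S3 reports such an MD5 as the object's ETag.

```python
import hashlib
from pathlib import Path


def md5_of(path, chunk_size=1 << 20):
    """Return the MD5 hex digest of a file, reading it in chunks."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def files_to_upload(local_root, remote_hashes):
    """List relative paths that are new or whose content differs.

    remote_hashes is a hypothetical dict of {object key: MD5 hex digest}
    describing what is already in the bucket.
    """
    root = Path(local_root)
    changed = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        key = path.relative_to(root).as_posix()
        if remote_hashes.get(key) != md5_of(path):
            changed.append(key)
    return changed
```

For example, `files_to_upload("/work/platform", remote_hashes)` would return only the keys that are missing from `remote_hashes` or whose local content no longer matches the recorded hash.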

Create a new IAM group

We are storing our access policies here: http://sagebionetworks.jira.com/source/browse/PLFM/trunk/configuration/awsIamPolicies

See the IAM documentation for more details about how to do this, but it's basically:

iam-groupcreate -g ReadOnlyUnrestrictedDataUsers
iam-groupuploadpolicy -g ReadOnlyUnrestrictedDataUsers -p ReadOnlyUnrestrictedDataPolicy -f ~/platform/trunk/configuration/awsIamPolicies/ReadOnlyUnrestrictedDataPolicy.txt
iam-groupadduser -u test -g ReadOnlyUnrestrictedDataUsers
iam-grouplistusers -g ReadOnlyUnrestrictedDataUsers
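
The contents of ReadOnlyUnrestrictedDataPolicy.txt are not reproduced on this page; the real file lives in the policy directory linked above. As a rough illustration only, a read-only policy for a single S3 bucket generally has the shape below. The choice of bucket (data01.sagebase.org) and of the two actions is an assumption for this example, and the helper function is hypothetical.

```python
import json


def read_only_bucket_policy(bucket):
    """Build an illustrative read-only IAM policy document for one bucket."""
    return {
        "Version": "2012-10-17",  # current IAM policy-language version
        "Statement": [
            {
                # Allow listing the bucket's contents.
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": f"arn:aws:s3:::{bucket}",
            },
            {
                # Allow downloading objects from the bucket.
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": f"arn:aws:s3:::{bucket}/*",
            },
        ],
    }


# Assumed bucket name, for illustration only.
policy_text = json.dumps(read_only_bucket_policy("data01.sagebase.org"), indent=2)
print(policy_text)
```

Note that bucket-level actions such as listing apply to the bucket ARN, while object-level actions such as downloading apply to the `/*` resource, which is why the sketch uses two statements.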

Create a new user and add them to IAM groups

Note that this is for adding Sage employees to groups by hand. The repository service will take care of adding Web Client and R Client users to the right IAM group(s) after they sign a EULA for a dataset.

See the IAM documentation for more details about how to do this, but it's basically:

iam-usercreate -u bruce.hoff -g Admins -k -v > bruce.hoff_creds.txt

Then give the user their credentials file.

Brian recommends that we store them in our server home directory on beltown/fremont so that they are backed up. If you have the Sage root password, you can get your credentials file from the backup location:

~>ssh ndeflaux@fremont ls -la /home/ndeflaux/PlatformIAMCreds
total 40
drwxrwxr-x 2 ndeflaux FHCRC\domain^users 4096 2011-02-16 16:31 .
drwxr-xr-x 30 ndeflaux FHCRC\domain^users 4096 2011-02-16 17:16 ..
-r-------- 1 ndeflaux FHCRC\domain^users 126 2011-02-16 16:31 brian.holt_creds.txt
-r-------- 1 ndeflaux FHCRC\domain^users 126 2011-02-16 16:31 bruce.hoff_creds.txt
-r-------- 1 ndeflaux FHCRC\domain^users 129 2011-02-16 16:31 david.burdick_creds.txt
-r-------- 1 ndeflaux FHCRC\domain^users 125 2011-02-16 16:31 john.hill_creds.txt
-r-------- 1 ndeflaux FHCRC\domain^users 127 2011-02-16 16:31 mike.kellen_creds.txt
-r-------- 1 ndeflaux FHCRC\domain^users 130 2011-02-16 16:31 nicole.deflaux_creds.txt
-r-------- 1 ndeflaux FHCRC\domain^users 332 2011-02-16 16:31 platform_cred.txt
-r-------- 1 ndeflaux FHCRC\domain^users 120 2011-02-16 16:31 test_creds.txt

Notes for the sake of posterity

Gotchas Getting Started with Beanstalk

Here are some gotchas I ran into when using Beanstalk for the first time:

- I created a key pair in US West and was confused when I couldn't get beanstalk to use that key pair.

- Beanstalk is only in US East so you have to make and use a key pair from US East

- Get the key pair PlatformKeyPairEast from Nicole

- I could not ssh to my box even though I had the right key pair and the hostname.

- I needed to edit the default firewall settings to open up port 22

- My servlet didn't work right away and I wanted to look at stuff on disk.

- The servlet WAR is expanded under /var/lib/tomcat6/webapps/ROOT/

- If you want to save time (and a Beanstalk deployment), you can overwrite that WAR with a new WAR.

- The log files are here:

/var/log
/var/log/tomcat6/monitor_catalina.log.lck
/var/log/tomcat6/tail_catalina.log
/var/log/tomcat6/tail_catalina.log.lck
/var/log/tomcat6/monitor_catalina.log
/var/log/httpd/error_log
/var/log/httpd/access_log
/var/log/httpd/elasticbeanstalk-access_log
/var/log/httpd/elasticbeanstalk-error_log

- Error: java.lang.NoClassDefFoundError: javax/servlet/jsp/jstl/core/Config

- In a tomcat container, such as Elastic Beanstalk, you have to include jstl.jar manually, hence this entry.

<dependency>
  <groupId>javax.servlet</groupId>
  <artifactId>jstl</artifactId>
  <version>1.2</version>
</dependency>

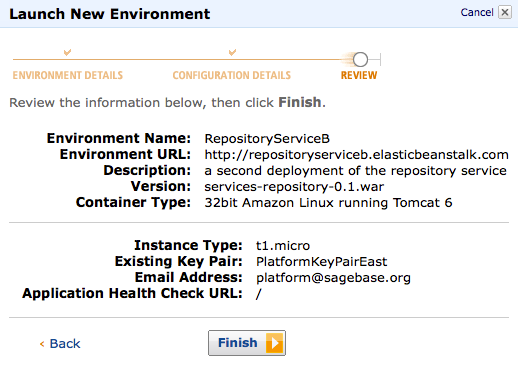

- Here's what your deployment might look like when things are working well: